A food-secure tomorrow

Harnessing machine learning to create climate-smart crops

By USask Research Profile and Impact

For the past century, plant breeders have selected the best seeds to grow through a time-consuming process of manually observing and measuring plants in the field.

But what if scientists could train computers to precisely analyze digital images of plants and routinely identify traits related to plant growth, health, resilience and yield?

Crop breeders would be able to more efficiently introduce new, improved varieties, and producers would have the information they need to make reliable crop management decisions (such as how much fertilizer and pesticide to use) during key growth stages to improve yield or respond to environmental changes.

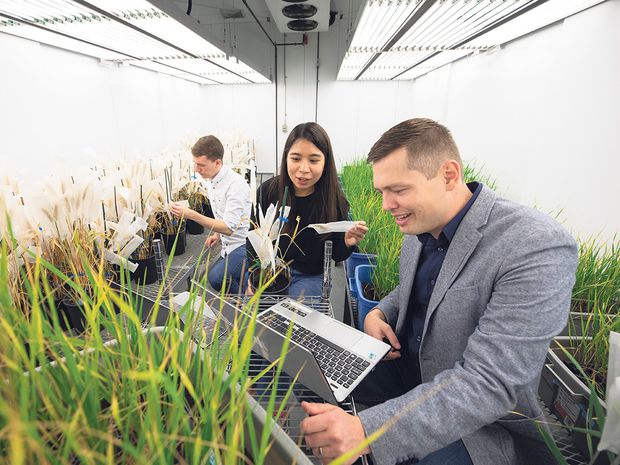

This transformation is underway through innovative research at the University of Saskatchewan (USask). Computer scientists are teaming up with plant scientists, remote sensing specialists and crop breeders to apply machine learning techniques to plant breeding, with a focus on wheat, canola and lentils.

“Science has generated lots of information about the genetics of plants, but tying genes to specific plant traits is the next big challenge,” says USask computer scientist Ian Stavness, who leads the team creating new image analysis tools for identifying desired plant traits (phenotypes) linked to specific DNA variations.

Brought together through the university’s Plant Phenotyping and Imaging Research Centre (P2IRC) at the Global Institute for Food Security, the team is one of only half a dozen in the world doing this type of cutting-edge “computational agriculture” work.

“With enough information to breed for specific traits, such as higher yield or resistance to drought, crop breeders can adapt quickly to changing climatic conditions,” he says. “And if we give farmers better information, then they will be able to grow better crops. A lot of farmers have started to own drones to provide images of their crops, but the issue is: How do you get useful information out of these images?”

Part of the answer is a new software program the team has developed called Deep Plant Phenomics, a reference to both digital phenotyping and “deep learning,” a class of machine learning techniques. Through the software program, a computer can “learn” to recognize an object or a specific pattern within a set of data derived from drone, sensor or satellite images of crops – similar to what recognition software programs can do for human facial features.

“This deep learning software program is a neural network that can be trained by giving it a number of examples,” he says.
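To make "trained by giving it a number of examples" concrete, here is a minimal sketch of the idea in Python. It is not Deep Plant Phenomics and does not use its API: it trains a single artificial neuron, the simplest building block of a neural network, on a handful of made-up image patches. The features (mean green and mean red intensity), the labels (1 for plant, 0 for soil) and the function names are all assumptions chosen for illustration.

```python
import math
import random


def train(examples, epochs=500, lr=0.5, seed=0):
    """Fit one logistic neuron by gradient descent on (features, label) pairs."""
    rng = random.Random(seed)
    n_features = len(examples[0][0])
    weights = [rng.uniform(-0.1, 0.1) for _ in range(n_features)]
    bias = 0.0
    for _ in range(epochs):
        for features, label in examples:
            z = sum(w * x for w, x in zip(weights, features)) + bias
            pred = 1.0 / (1.0 + math.exp(-z))  # sigmoid activation
            error = pred - label               # gradient of the log loss w.r.t. z
            weights = [w - lr * error * x for w, x in zip(weights, features)]
            bias -= lr * error
    return weights, bias


def predict(model, features):
    """Return the neuron's estimated probability that a patch shows a plant."""
    weights, bias = model
    z = sum(w * x for w, x in zip(weights, features)) + bias
    return 1.0 / (1.0 + math.exp(-z))


# Hypothetical training examples: (mean green, mean red) per image patch,
# both scaled to the range 0-1; label 1 = plant, label 0 = bare soil.
EXAMPLES = [
    ((0.80, 0.20), 1), ((0.70, 0.30), 1), ((0.90, 0.25), 1),
    ((0.30, 0.60), 0), ((0.35, 0.55), 0), ((0.20, 0.50), 0),
]

model = train(EXAMPLES)
```

After training, `predict(model, (0.85, 0.20))` returns a probability above 0.5 for a green patch the neuron has never seen. A deep network stacks many such units and learns directly from pixels, but the principle of adjusting weights from labelled examples is the same.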

How well does the automated machine learning approach compare with manual trait identification in predicting key crop indicators?

“The software program is about as good as manually counting, and of course it saves a lot of manual work,” says Dr. Stavness. “The data we are producing at P2IRC – the images combined with phenotypic information – constitutes some of the largest and most specific data-sets that exist in the world for plant breeders.”

For USask wheat breeder Curtis Pozniak, who monitors tens of thousands of university research plots across the province, the new software program gives him additional information to improve the precision of selection, reducing site visits and aiding crop development decision-making. Similarly, USask plant breeder Kirstin Bett is applying the new tools to lentil breeding, and Sally Vail, a research scientist at Agriculture and Agri-Food Canada, is using them in canola breeding.

Key plant trait data is recorded through sophisticated non-invasive imaging. Steve Shirtliffe, a USask plant scientist whose research includes state-of-the-art applications of drones for imaging crops, is identifying new plant traits that were previously impossible to measure.

The research is starting to benefit farmers.

“The main benefit so far is that farmers are getting new types of canola and wheat that will produce higher yields in a wider range of growing conditions,” says Dr. Stavness. “Eventually these same data-sets and analysis techniques could be used by farmers who use drones to look at the health of their crops and influence how they manage them during each growing season.”

While some companies provide information such as how healthy crop fields are, the team’s work “provides more precise information down to the specific plant, such as how each individual plant is doing,” Dr. Stavness says.

His team has also developed another software program, PlotVision, which makes it easier for breeders and scientists to get images of individual plots from aerial images captured by drones. The tools have been demonstrated in the computer lab, and efforts are underway to build knowledge among potential users.
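The core task of cutting one aerial image into per-plot images can be sketched in a few lines of Python. This is an illustration only, not PlotVision's method: it assumes the field is an axis-aligned, evenly spaced grid of plots and that the image is a plain list of pixel rows, whereas real drone imagery needs georeferencing and alignment first. The function name `split_into_plots` is hypothetical.

```python
def split_into_plots(image, n_rows, n_cols):
    """Cut a 2D image (a list of pixel rows) into an n_rows x n_cols grid of tiles."""
    height, width = len(image), len(image[0])
    if height % n_rows or width % n_cols:
        raise ValueError("image size must divide evenly into the plot grid")
    tile_h, tile_w = height // n_rows, width // n_cols
    plots = {}
    for r in range(n_rows):
        for c in range(n_cols):
            rows = image[r * tile_h:(r + 1) * tile_h]
            plots[(r, c)] = [row[c * tile_w:(c + 1) * tile_w] for row in rows]
    return plots


# Toy 4x6 "image" whose pixel values encode their own (row, column) position.
image = [[10 * y + x for x in range(6)] for y in range(4)]
plots = split_into_plots(image, n_rows=2, n_cols=3)
```

Each entry in `plots` is keyed by its (row, column) position in the field layout, so a trait measured on a tile can be traced back to the plot it came from.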

“We are on a push to make these open-source tools available around the world,” says Dr. Stavness. “We think our research has a bigger impact if it doesn’t just sit on the shelf but is used by plant scientists both regionally and in other parts of the world.”

The P2IRC research program is funded by a $37.2-million grant from the federal Canada First Research Excellence Fund, awarded to USask in 2015. “We would not be doing this valuable research without this funding,” says Dr. Stavness.

The team of 10 faculty and six software developers also involves 14 graduate students and two post-doctoral fellows.

P2IRC program director Andy Sharpe, who helped sequence the genome for bread wheat, says P2IRC is leading the way in Canada and beyond in the areas of plant phenotyping and imaging. “The collaborative nature of our work across so many disciplines and our partnerships with academia, government, industry and international development organizations are paving the way for the discovery of new technologies that will contribute to food security in Saskatchewan and around the world.”

This article originally appeared in the Globe and Mail on November 22, 2019.